Ultimate guide to artificial intelligence in the enterprise

- The Tech Platform

- Nov 9, 2020


AI in the enterprise will change how work is done, but companies must overcome several challenges to derive value from this powerful and rapidly evolving technology.

The application of artificial intelligence in the enterprise will profoundly change the way businesses work. Companies are beginning to incorporate AI into their business operations with the aim of saving money, boosting efficiency, generating insights and creating new markets.

There are AI-powered applications to enhance customer service, maximize sales, sharpen cybersecurity, optimize supply chains, free up workers from mundane tasks, improve existing products and point the way to new products. It is hard to think of an area in the enterprise where AI -- the simulation of human intelligence processes by machines, especially computer systems -- will not have a deep impact.

Enterprise leaders determined to use AI to improve their businesses and ensure a return on their investment, however, face big challenges on several fronts:

The domain of artificial intelligence is changing rapidly because of the tremendous amount of AI research being done. The world's biggest companies, research institutions and governments around the globe are supporting major research initiatives on AI.

There are a multitude of AI use cases: AI can be applied to any problem facing a company or to humankind writ large. In the COVID-19 outbreak, AI is playing an important role in the global effort to contain the spread, detect hotspots, improve patient care, identify therapies and develop vaccines. As we eventually emerge from the pandemic, analyst firm Forrester Research expects investment in AI-enabled hardware and software robotics to surge as companies strive to build resilience against other catastrophes.

To reap the value of AI, enterprise leaders must understand how AI works, where AI technologies can be easily used in their businesses and where they cannot -- a daunting proposition for the reasons cited above.

This wide-ranging guide to enterprise AI provides the building blocks for becoming an intelligent business consumer of artificial intelligence. It begins with a brief explanation of how AI works and the main types of AI. You will learn about the importance of AI to companies, including a discussion of AI's principal benefits and the technical and ethical risks it poses; current and potential AI use cases; the challenges of integrating AI applications into existing business processes; and some of the technological breakthroughs driving the field forward. Throughout the guide, we include hyperlinks to TechTarget articles that provide more detail and insights on the topics discussed.

How does AI work?

Many of the tasks done in the enterprise are not automatic but require a certain amount of intelligence. What characterizes intelligence, especially in the context of work, is not simple to pin down. Broadly defined, intelligence is the capacity to acquire knowledge and apply it to achieve an outcome: The action taken is related to the particulars of the situation rather than done by rote.

Getting a machine to perform in this manner is what is generally meant by artificial intelligence. But as AI experts take pains to state, there is no single or simple definition of AI. In a 2016 report by the National Science and Technology Council on preparing for the future of AI, the authors noted that definitions of AI vary from practitioner to practitioner.

"Some define AI loosely as a computerized system that exhibits behavior that is commonly thought of as requiring intelligence. Others define AI as a system capable of rationally solving complex problems or taking appropriate actions to achieve its goals in whatever real world circumstances it encounters," the report stated.

Moreover, what qualifies as an intelligent machine, the authors explained, is a moving target: A problem that is considered to require AI quickly becomes regarded as "routine data processing" once it is solved.

At a basic level, AI programming focuses on three cognitive skills: learning, reasoning and self-correction.

The learning aspect of AI programming focuses on acquiring data and creating rules for how to turn data into actionable information. The rules, called algorithms, provide computing systems with step-by-step instructions on how to complete a specific task.

The reasoning aspect involves AI's ability to choose the most appropriate algorithm, among a set of algorithms, to use in a particular context.

The self-correction aspect focuses on AI's ability to progressively tune and improve a result until it achieves the desired goal.
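To make the three skills concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of how any particular AI product is built: the wear data, the two candidate models and every function name are hypothetical.

```python
from statistics import mean

# Hypothetical toy data: (hours of machine use, observed wear) pairs.
data = [(1, 2.1), (2, 3.9), (3, 6.2), (4, 7.8)]


# Learning: turn data into a rule (here, a slope fitted through the origin).
def learn_slope(points):
    return sum(x * y for x, y in points) / sum(x * x for x, _ in points)


# Two candidate algorithms for predicting wear from hours of use.
def constant_model(points):
    avg = mean(y for _, y in points)
    return lambda x: avg


def linear_model(points):
    slope = learn_slope(points)
    return lambda x: slope * x


def mean_squared_error(model, points):
    return mean((model(x) - y) ** 2 for x, y in points)


# Reasoning: choose the most appropriate algorithm for this context.
# For brevity the candidates are scored on the same toy data they were
# fitted to; a real project would score them on held-out data.
def choose_model(points, candidates):
    fitted = [build(points) for build in candidates]
    return min(fitted, key=lambda m: mean_squared_error(m, points))


# Self-correction: progressively tune a result until it is close enough.
# This is plain gradient descent on the squared error of the slope.
def self_correct(points, slope=0.0, step=0.01, tolerance=1e-6, max_rounds=10_000):
    for _ in range(max_rounds):
        gradient = mean(2 * (slope * x - y) * x for x, y in points)
        if abs(gradient) < tolerance:
            break
        slope -= step * gradient
    return slope


best = choose_model(data, [constant_model, linear_model])
tuned_slope = self_correct(data)
print(f"prediction for 2 hours: {best(2):.2f}, tuned slope: {tuned_slope:.2f}")
```

On this toy data the linear rule fits far better than the constant one, so the "reasoning" step selects it, and the "self-correction" loop arrives at roughly the same slope that the direct fit computes.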

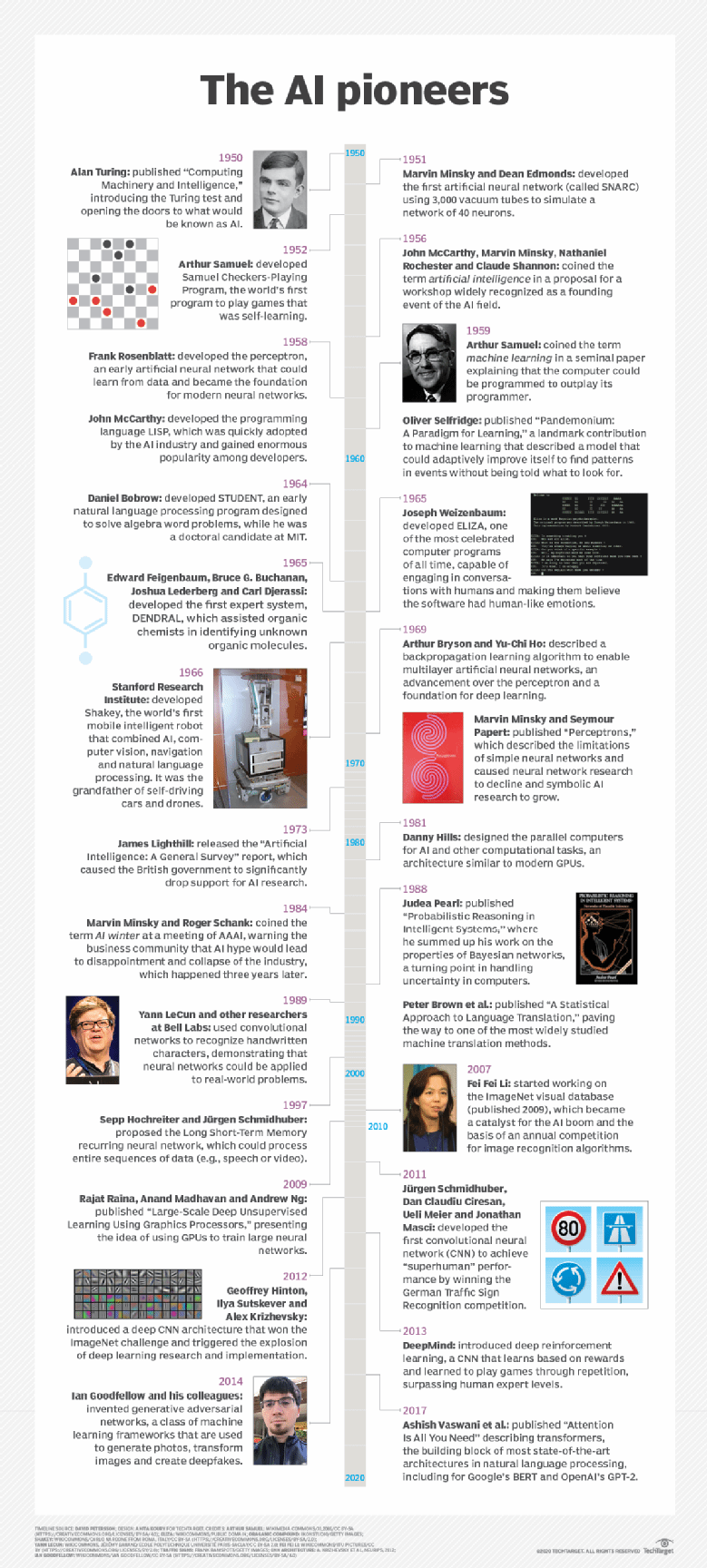

Evolution of AI: 4 types of AI

The concept of a machine with human-like intelligence dates to ancient times, represented in the metal automatons referred to in Greek myths, for example, and by the animatronic statues built by Egyptian engineers. Modern AI based on computer systems is generally cited as beginning in the mid-1950s when the term artificial intelligence was coined at a summer conference on the campus of Dartmouth College. Since then, AI's role in the enterprise has waxed and waned, experiencing two periods known as AI winters when funding and industry interest lagged. Despite these fallow periods, AI continued to evolve. Over the decades, computer scientists, mathematicians and experts in other fields have strived to advance the field either by improving the algorithms or the hardware.

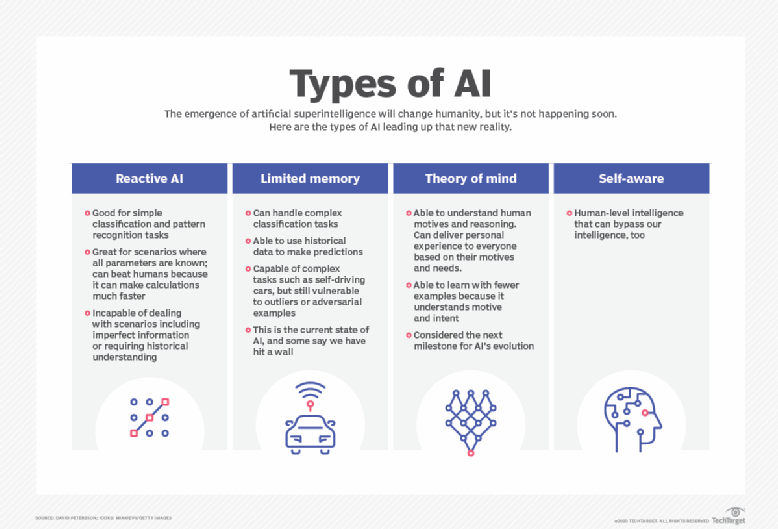

In his article "4 main types of AI explained," author David Petersson described how modern artificial intelligence evolved from AI systems capable of simple classification and pattern recognition tasks to systems capable of using historical data to make predictions. Propelled by a revolution in deep learning -- i.e., AI that learns from data -- machine intelligence has advanced rapidly in the 21st century, bringing us such breakthrough products as self-driving cars and the virtual assistants Alexa and Siri. Although sentient robots are a fixture of popular culture, the type of AI that demonstrates human-level general intelligence and consciousness is a work in progress. Here are the four categories of AI outlined in Petersson's article and a summary of their characteristics:

Reactive AI. Algorithms used in this early type of AI lack memory and are reactive: That is, given a specific input, the output is always the same. Machine learning models using this type of AI are effective for simple classification and pattern recognition tasks. They can consider huge chunks of data and produce a seemingly intelligent output, but they are incapable of analyzing scenarios that include imperfect information or require historical understanding.
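The "same input, same output" property described above can be sketched in a few lines of Python. This is only an illustration: the quality-control rule, the tolerance values and the function name are assumptions, not taken from Petersson's article.

```python
def classify_part(width_mm: float, target_mm: float = 20.0, tolerance_mm: float = 0.5) -> str:
    """Stateless check: the result depends only on the arguments, never on past calls."""
    return "pass" if abs(width_mm - target_mm) <= tolerance_mm else "reject"


# Hypothetical sensor readings; the first and last are identical.
readings = [20.1, 20.4, 20.7, 20.1]
results = [classify_part(r) for r in readings]
print(results)
```

Because the function keeps no memory, the repeated reading of 20.1 gets the same answer both times, and the upward drift across the first three readings goes unnoticed. Spotting that trend would require the historical understanding that reactive systems lack.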

Limited memory machines. The underlying algorithms in limited memory machines are based on our understanding of how the human brain works and designed to imitate the way our neurons connect. This type of machine "deep learning" can handle complex classification tasks and use historical data to make predictions; it also is capable of complex tasks, such as autonomous driving. (See "AI vs. machine learning vs. deep learning: Key differences," for a clear explanation of three terms that are often used interchangeably but have important distinctions.)

Despite the ability to far outdo typical human performance in certain tasks, limited memory machines lag behind human intelligence in other respects. They require huge amounts of training data to learn tasks humans learn with just a few examples, and they are vulnerable to outliers or adversarial examples. This type of "weak or narrow AI" reflects the current state of AI development. As Petersson noted, some believe the field may be hitting a wall.

Theory of mind. This type of as-yet-unrealized AI is defined as capable of understanding human motives and reasoning and therefore able to deliver personalized results based on an individual's motives and needs. Also referred to as artificial general intelligence (AGI), theory of mind AI can learn with fewer examples than limited memory machines; it can contextualize and generalize information, and extrapolate knowledge to a broad set of problems. Artificial emotional intelligence, or the ability to detect human emotions and empathize with people, is being developed, but the current systems do not exhibit theory of mind and are very far from self-awareness, the next milestone in the evolution of AI.

Self-aware AI aka artificial superintelligence. This type of AI is not only aware of the mental state of other entities but is also aware of itself. Self-aware AI, or artificial superintelligence (ASI), is defined as a machine with intelligence on par with human general intelligence and in principle capable of far surpassing human cognition by creating ever more intelligent versions of itself. Currently, however, we don't know enough about how the human brain is organized to build an artificial one that is as, or more, intelligent in a general sense.

Why is AI important in the enterprise?

According to research firm IDC, by 2025, the volume of data generated worldwide will reach 175 zettabytes (that is, 175 billion terabytes), an astounding 430% increase over the 33 zettabytes of data produced by 2018. For companies committed to data-driven decision-making, the surge in data will be a boon. Large data sets are the raw material for the vital in-depth analysis that drives improvements in existing business operations and leads to new lines of business.
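The figures in the paragraph above can be checked with a few lines of Python. The only assumption is the use of decimal units, in which one zettabyte equals one billion terabytes; the variable names are illustrative.

```python
ZB_2018 = 33           # zettabytes, from the IDC figures cited above
ZB_2025 = 175          # zettabytes, IDC projection cited above
TB_PER_ZB = 10**9      # decimal units: 1 ZB = 10**21 bytes, 1 TB = 10**12 bytes

increase_pct = (ZB_2025 - ZB_2018) / ZB_2018 * 100
terabytes_2025 = ZB_2025 * TB_PER_ZB

print(f"increase: {increase_pct:.0f}%")
print(f"2025 volume: {terabytes_2025:,} TB")
```

The increase works out to roughly 430%, and 175 zettabytes is indeed 175 billion terabytes.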

Companies cannot capitalize on these data stores, however, without AI. As writer Mary K. Pratt reported in her article "Importance of AI in the business quest for data-driven operations," AI and big data play a symbiotic role in 21st century business success: Deep learning, a subset of AI, processes large data stores to identify the subtle patterns and correlations in big data that can give companies a competitive edge; simultaneously, AI's ability to make meaningful predictions -- to get at the truth of a matter rather than mimic human biases -- requires high-quality data and usually vast amounts of it.

AI's importance to 21st century business has been compared to the strategic value of electricity in the early 20th century, when electrification transformed industries like manufacturing and created new ones like mass communications. "AI is strategic because the scale, scope, complexity and the dynamism in business today is so extreme that humans can no longer manage it without artificial intelligence," Chris Brahm, partner and director at Bain & Company, told TechTarget.

AI's biggest impact on business in the near future stems from its ability to automate and augment jobs that today are done by humans.

Labor gains realized from using AI are expected to expand upon and surpass those made by current workplace automation tools. And by analyzing vast volumes of data, AI won't simply automate work tasks, but will generate the most efficient way to complete a task and adjust workflows on the fly as circumstances change.

AI is already augmenting human work in many fields, from assisting doctors in medical diagnoses to helping call center workers deal more effectively with customer queries and complaints. In security, AI is being used to automatically respond to cybersecurity threats and prioritize those that need human attention. Banks are using AI to speed up and support loan processing and to ensure compliance. (See section "Current and potential use cases.")

But AI will also eliminate many jobs done today by humans -- a major concern to workers, as described in the following sections on benefits and risks of AI.

Benefits of AI in the enterprise

Most companies at this juncture are looking to use AI to optimize existing operations rather than radically transform their business models. The aforementioned gains in productivity and efficiency are the most cited benefits of implementing AI in the enterprise.

Here are five additional key benefits of AI for business:

Improved customer service. The ability of AI to speed up and personalize customer service is among the top benefits businesses expect to reap from AI, ranked No. 2 among AI payoffs in a 2019 research study by MIT Sloan Management Review and Boston Consulting Group.

Improved monitoring. AI's capacity to process data in real time means organizations can implement near-instantaneous monitoring; for example, factory floors are using image recognition software and machine learning models in quality control processes to monitor production and flag problems.

Faster product development. AI enables shorter development cycles and reduces the time between design and commercialization for a quicker ROI of development dollars.

Better quality. Organizations expect a reduction in errors and increased adherence to compliance standards by using AI on tasks previously done manually or done with traditional automation tools, such as extract, transform and load. Financial reconciliation is an area where machine learning, for example, has substantially reduced costs, time and errors.

Business model innovation and expansion. Digital natives like Amazon, Airbnb, Uber and others certainly have used AI to help implement new business models. Traditional companies may find AI-enabled business model transformation a hard sell, according to Andrew Ng, a pioneer of AI development at Google and Baidu and currently CEO and co-founder of Landing AI. He offered a six-step playbook for getting AI off the ground at traditional companies.

AI risks

One of the biggest risks to the effective use of AI in the enterprise is worker mistrust. Many employees fear and distrust AI or remain unconvinced of its value in the workplace.

Anxieties about job elimination are not unfounded, according to many studies. A report from the Brookings Institution, "Automation and Artificial Intelligence: How Machines Are Affecting People and Places," estimated that some 36 million jobs "face high exposure to automation" in the next decade. The jobs most vulnerable to elimination are in office administration, production, transportation and food preparation, but the study found that by 2030, virtually every occupation will be affected to some degree by AI-enabled automation. (Gartner has stated that in 2020, AI will be a net-positive job motivator, eliminating 1.8 million jobs while creating 2.3 million jobs.)

Of more immediate concern is the prevailing skepticism about AI's value in the workplace: 42% of IT and business executives surveyed do not "fully understand the AI benefits and use in the workplace," according to Gartner's 2019 CIO Agenda survey. Fear of the unknown accounts for some of this skepticism, the report stated, adding that business and IT leaders must take on the challenge of quantifying the benefits of AI to employees. Companies must strive to tie AI to tangible KPIs, such as increased revenue and time saved, as well as train employees in the new skills they will need in an AI-enabled workplace.

Without worker trust, the benefits of AI will come to naught, Beena Ammanath, AI managing director at Deloitte Consulting LLP, told TechTarget in an article on the top AI risks businesses must confront when implementing the technology.

"I've seen cases where the algorithm works perfectly, but the worker isn't trained or motivated to use it," Ammanath said. Consider the example of an AI system on a factory floor that determines when a manufacturing machine must be shut down for maintenance.

"You can build the best AI solution -- it could be 99.998% accurate -- and it could be telling the factory worker to shut off the machine. But if the end user doesn't trust the machine, which isn't unusual, then that AI is a failure," Ammanath said.

As AI models become more complex, explainability -- understanding how an AI reached its conclusion -- will become ever-harder to convey to frontline workers who need to trust the AI to make decisions.

Companies must put users first, according to the experts interviewed in this report on explainable AI techniques: Data scientists should focus on providing the information relevant to the particular domain expert, rather than getting into the weeds of how a model works. For a machine learning model that predicts the risk of a patient being readmitted, for example, the physician may want an explanation of the underlying medical reasons, while a discharge planner may want to know the likelihood of readmission.

Here is a summary of three more big AI risks discussed in the article cited above:

AI errors. While AI can eliminate human error, problematic data, poor training data or mistakes in the algorithms can lead to AI errors. And those errors can be dangerously compounded because of the large volume of transactions AI systems typically process. "Humans might make 30 mistakes in a day, but a bot handling millions of transactions a day magnifies any error," Bain & Company's Brahm said.
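A rough back-of-the-envelope calculation shows why volume matters. Only the figure of 30 human mistakes a day comes from the quote above; the bot's transaction volume and error rate are assumptions chosen purely for illustration.

```python
HUMAN_MISTAKES_PER_DAY = 30           # figure from the quote above
BOT_TRANSACTIONS_PER_DAY = 2_000_000  # assumption: "millions" taken as two million
BOT_ERRORS_PER_MILLION = 100          # assumption: a 0.01% error rate

bot_errors_per_day = BOT_TRANSACTIONS_PER_DAY * BOT_ERRORS_PER_MILLION // 1_000_000
times_more_than_human = bot_errors_per_day / HUMAN_MISTAKES_PER_DAY

print(f"bot errors per day: {bot_errors_per_day}")
print(f"ratio to one human's daily mistakes: {times_more_than_human:.1f}x")
```

Under these assumptions, a system that is right 99.99% of the time still produces several times as many errors a day as the person in the quote, simply because it handles so many more transactions.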

Unethical and unintended practices. Companies need to guard against unethical AI. Enterprise leaders are likely familiar with the reports on the racial bias baked into the AI-based risk prediction tools used by some judges to sentence criminals and decide bail. Companies must also be on the alert for unintended consequences of using AI to make business decisions. An example is a grocery chain that uses AI to determine pricing based on competition from other grocers. In poor neighborhoods where there is little or no competition, the logical recommendation might be to charge more for food, but is this the strategy the grocery chain intends?

Erosion of key skills. This is a rarely considered but not unimportant risk of AI. In the wake of the two plane crashes involving Boeing 737 Max jets, some experts expressed concern that pilots were losing basic flying skills -- or at least the ability to employ them -- as the jet relied on increasing amounts of AI in the cockpit. Although these are extreme cases, the events should raise questions about key skills enterprises might want to preserve in their human workforce as AI's use expands, Brahm said.

Current and potential use cases

A Google search for AI use cases turns up millions of results, an indication of the many ways in which AI is applied in the enterprise -- or at least can be applied (see section "Adoption in the enterprise"). AI use cases span industries from financial services -- an early adopter -- to healthcare, education, marketing and retail. AI has made its way into every business department, from marketing, finance and HR to IT and business operations. Additionally, the use cases incorporate a range of AI applications. Among them: natural language generation tools used in customer service, deep learning platforms used in automated driving, and biometric identifiers used by law enforcement.

Here is a sampling of current AI use cases in multiple industries and business departments with links to the TechTarget articles that explain each one in depth.

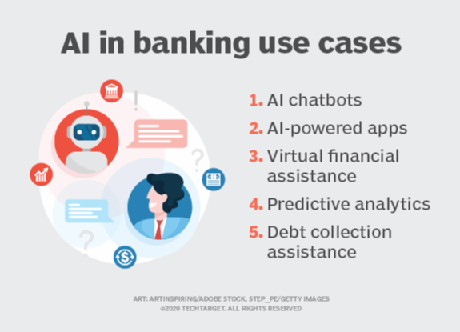

Financial services. Artificial intelligence is transforming how banks operate and how customers bank. Read about how Chase Bank, JPMorgan Chase, Bank of America, Wells Fargo and other banking behemoths are using AI to automate customer service and streamline back office processes in this in-depth feature "AI use cases in banking create opportunities, improve systems."

Manufacturing. Collaborative robots, aka cobots, are working on assembly lines and in warehouses alongside humans, functioning as an extra set of hands; factories are using AI to predict maintenance needs; machine learning algorithms detect buying habits to predict product demand for production planning. Read about these AI implementations and potential new AI applications in "10 AI use cases in manufacturing."

Agriculture. The $5 trillion agriculture industry is using AI to yield healthier crops, reduce workloads and organize data. Read Cognilytica analyst Kathleen Walch's deep dive into agriculture's use of AI technology and about John Deere's AI journey.

Law. The document-intensive legal industry is using AI to save time and improve client service. Law firms are deploying machine learning to mine data and predict outcomes; they are also using computer vision to classify and extract information from documents and natural language processing to interpret requests for information. Watch Vince DiMascio talk about how his team is applying AI and robotic process automation at the large immigration law firm where he serves as CIO and CTO.

Education. In addition to automating the tedious process of grading exams, AI is being used to assess students and adapt curricula to their needs. Listen to this podcast with Ken Koedinger, professor of human-computer interaction and psychology at Carnegie Mellon University's School of Computer Science, on how machine learning is providing insight into how humans learn and paving the way for personalized learning.

IT service management. IT organizations are using natural language processing to automate user requests for IT services. They are applying machine learning to ITSM data to gain a richer understanding of their infrastructure and processes. Dig deeper into how organizations are using AI to optimize IT services in "10 AI and machine learning use cases in ITSM."

Evolution of AI use cases

The range of AI use cases in the enterprise is not only expanding but also evolving in the face of current market uncertainty. As companies grapple with the implications of the coronavirus pandemic and fallout, AI will help companies understand how they need to pivot to stay relevant and profitable, said Arijit Sengupta, CEO of Aible, an AI platform provider.

"In the current COVID-19 emergency, the most significant use cases are going to center on scenario planning, hypothesis testing and assumption testing," Sengupta told TechTarget.

But model building will have to become nimbler and more iterative. Predictive models, for example, could be pushed out to salespeople, who in turn become critical players in feeding the models real-time empirical data for ongoing analysis. "For any use case, getting end-user feedback is critical in uncertain times, because end users will know things that haven't surfaced in the data yet," Sengupta said.

For a look at how AI use cases are evolving in eight areas, read reporter George Lawton's informative article, "AI use cases in the enterprise undergoing rapid change."

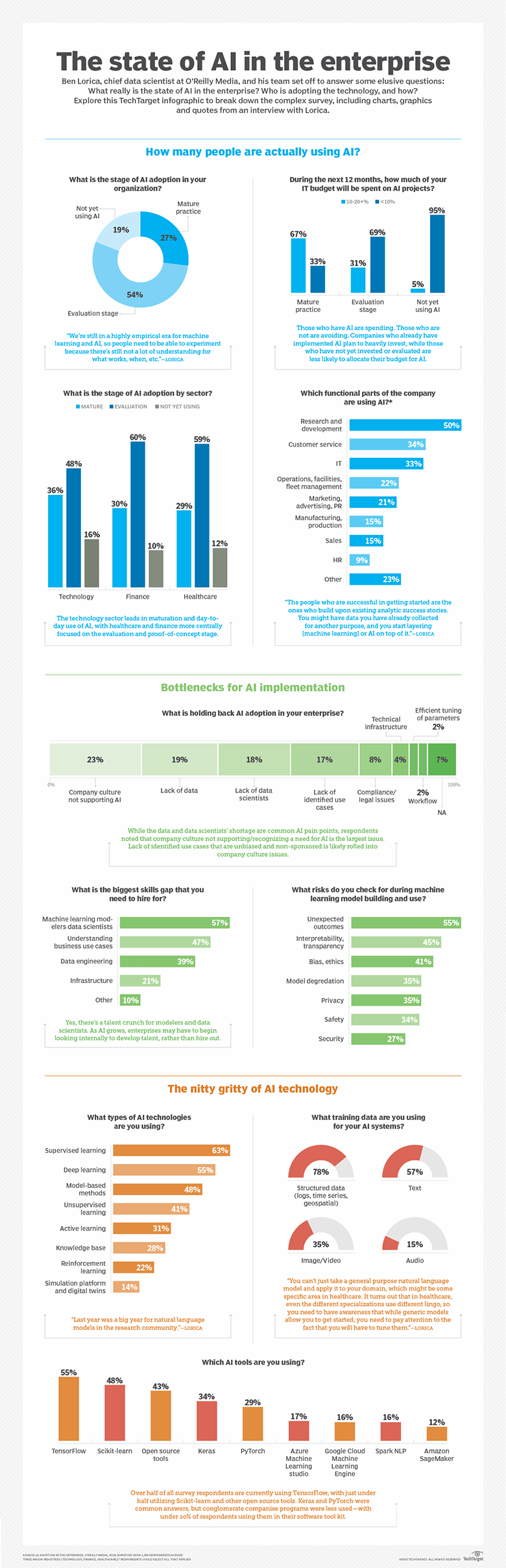

Adoption in the enterprise

Recent studies on AI adoption in the enterprise assert that AI deployments are rising. By 2022, for example, Gartner projected that the average number of AI projects per company will grow to 35, a 250% increase over the average of 10 projects projected for 2020. But whether the growth in AI adoption is as strong as anticipated or will pan out as predicted is open to debate. As Gartner noted, a 10 percentage point gain in enterprise AI adoption to 14% in 2019 from 4% the previous year fell well short of the firm's projected 21-point increase. What is clear is that companies still face many challenges in deploying AI.
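The paragraph above mixes two kinds of change: a percent increase (projects per company) and a percentage-point gain (adoption rate). The short Python check below, with illustrative variable names, uses only the Gartner figures quoted above to keep the two apart.

```python
# Projects per company: a relative (percent) increase.
projects_2020, projects_2022 = 10, 35
project_growth_pct = (projects_2022 - projects_2020) / projects_2020 * 100

# Adoption rate: an absolute change measured in percentage points.
adoption_2018, adoption_2019 = 4, 14       # percent of enterprises
actual_gain_points = adoption_2019 - adoption_2018
projected_gain_points = 21
shortfall_points = projected_gain_points - actual_gain_points

print(f"project growth: {project_growth_pct:.0f}%")
print(f"adoption gain: {actual_gain_points} points, "
      f"{shortfall_points} points short of the projection")
```

The jump from 10 to 35 projects is a 250% increase, while the adoption gain of 10 percentage points fell 11 points short of the projected 21-point rise.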

Companies that have deployed AI are realizing that figuring out how to do AI is not the same as using AI to make money. As Lawton chronicled in an in-depth report on "last-mile" delivery problems in AI, businesses are finding it much harder to weave AI technologies into existing business processes than to build or buy the complex AI models that promise to optimize those processes. Ian Xiao, manager at Deloitte Omnia AI, estimated that most companies deploy between 10% and 40% of their machine learning projects. Among the challenges holding back companies from integrating AI into their business processes are the following:

lack of the last-mile infrastructure -- such as robotic process automation, integration platform as a service and low-code platforms -- needed to connect AI into the business;

lack of domain experts who can assess what AI is good for, specifically figuring out which decision-making elements of a process can be automated by AI and how to then reengineer the process; and

lack of feedback on machine learning models, which need to be updated to reflect new data.

Industry best practices for deploying AI, however, are emerging, as described in a TechTarget article on criteria for success in AI, highlighting advice from practitioners from Shell and Uber, among others, in charge of big AI initiatives. The most critical factor cited by all the data scientists profiled was the need to work closely with the company's subject matter experts. People with in-depth knowledge of the subject matter, they stressed, provide the context and nuance that are hard for deep learning tools to tease apart on their own.

Artificial intelligence trends: Giant chips, new CPUs, neuro-symbolic AI

It is hard to overestimate how much development is being done on artificial intelligence by vendors, governments and research institutions -- and how quickly the field is changing. On the hardware side, startups are developing new and different ways of organizing memory, compute and networking that could reshape the way leading enterprises design and deploy AI algorithms. At least one vendor has begun testing a single chip about the size of an iPad that, as detailed in this report on AI giant chips, can move data around thousands of times faster than existing AI chips.

Even accepted wisdom -- such as the superiority of GPUs vs. CPUs for running AI workloads -- is being challenged by leading AI researchers. A team at Rice University, for example, is working on a new category of algorithms, called SLIDE (Sub-LInear Deep learning Engine), that promises to make CPUs practical for more types of algorithms. CPUs, of course, are commodity hardware -- present everywhere and cheap compared to GPUs. "If we can design algorithms like SLIDE that can run AI directly on CPUs efficiently, that could be a game changer," Anshumali Shrivastava, assistant professor in the department of computer science at Rice, told TechTarget.

On the software side, scientists in academia and industry are pushing the limits of current applications of artificial intelligence. In the feverish quest to develop sentient machines that rival human general intelligence, traditional antagonists in AI are burying the hatchet.

Proponents of symbolic AI -- methods that are based on high-level symbolic representations of problems, logic and search -- are joining forces with proponents of data-intensive neural networks to develop AI that is good at both capturing compositional and causal structure (symbolic AI) and capable of the image recognition and natural language processing feats performed by deep neural networks. This neuro-symbolic approach would allow machines to reason about what they see, representing a milestone in the evolution of AI.

"Neuro-symbolic modeling is one of the most exciting areas in AI right now," Brenden Lake, assistant professor of psychology and data science at New York University, told TechTarget. Read our feature story on this groundbreaking research and its implications for the future of AI.

Source: paper.li
