Directus with MSSQL and Docker

- The Tech Platform

- Feb 9, 2022

- 5 min read

What is Directus? Directus is an open source data platform, which enables anyone in an organization — from developers to data scientists to business users — to access and manage the database content that powers all your applications and digital experiences. For developers, a data connection toolkit provides a dynamic REST+GraphQL API, JavaScript SDK, and options for authorization, caching, and more. It is written entirely in JavaScript (primarily Node.js and Vue.js )

Key Features of Directus:

No-Code App for anyone to manage data

Data Connection Toolkit with REST & GraphQL

Supports Any SQL Database

Authorization & Caching

Completely Free & Open Source

Let’s get our hands dirty and see, how can we use Directus as a backend API for any application.

Things we are going to use

Docker Desktop

Docker Compose

VS Code (you can use any other editor)

If you don’t have docker desktop, please follow below instructions,

Docker Desktop for Mac and Windows | Docker

www.docker.com

To install docker compose , follow below instructions,

Install Docker Compose

docs.docker.com

Brief: So today we will be creating an REST and GraphQL API for backend of our Northwind Application using Directus. So basically, Directus will provide an layer on top of SQL Server, by using Directus we can query and fetch the data and also using the Directus UI (no-code app) , we can update and visualize the data.

Directus Architecture

How to install and run Directus with MSSQL in Docker? So we will create two containers, one is for our SQL Server and another is with the Directus Image. In this case we will create an Custom SQL Server image to create the Database at the initialization and once the DB is created we will initialize the directus with those credentials and the new DB.

We will use Docker Compose to deploy these two containers to local docker environment.

Few things first, our code repository will look like this,

It will contain five files,

1. configure-db.sh → This is to run the DB initialization scripts for SQL Server which is present in setup.sql. It will create the DB and new login, which will be used in Directus.

#!/bin/bash

sleep 90

# wait for MSSQL server to start

export STATUS=1

i=0

while [[ $STATUS-ne 0 ]] && [[ $i-lt 30 ]];do

i=$i+1

/opt/mssql-tools/bin/sqlcmd -t 1 -U sa -P $SA_PASSWORD -Q "select 1">> /dev/null

STATUS=$?

done

if [ $STATUS-ne 0 ];then

echo"Error: MSSQL SERVER took more than thirty seconds to start up."

exit 1

fi

echo"======= MSSQL SERVER STARTED ========"| tee -a ./config.log

# Run the setup script to create the DB and the schema in the DB

/opt/mssql-tools/bin/sqlcmd -S localhost -U sa -P $SA_PASSWORD -d master -i setup.sql

echo"======= MSSQL CONFIG COMPLETE ======="| tee -a ./config.log2. entrypoint.sh → This is used to start the SQL Server and execute configure-db.sh shell-script.

#!/bin/bash

# Start SQL Server

/opt/mssql/bin/sqlservr &

# Start the script to create the DB and user

/usr/config/configure-db.sh

# Call extra command

eval$13. Dockerfile → This is used to create Custom Image for SQL Server, which will execute entrypoint.sh on container Startup.

FROM mcr.microsoft.com/mssql/server:2017-CU24-ubuntu-16.04

USER root

# Create a config directory

RUN mkdir -p /usr/config

WORKDIR /usr/config

# Bundle config source

COPY . /usr/config

# Grant permissions for to our scripts to be executable

RUN chmod +x /usr/config/entrypoint.sh

RUN chmod +x /usr/config/configure-db.sh

ENTRYPOINT ["/usr/config/entrypoint.sh"]

# Tail the setup logs to trap the process

CMD ["tail -f /dev/null"]

HEALTHCHECK --interval=15s CMD /opt/mssql-tools/bin/sqlcmd -U sa -P $SA_PASSWORD -Q "select 1" && grep -q "MSSQL CONFIG COMPLETE" ./config.log

4. setup.sql → This contains SQL Scripts which will execute during container creation and create new Database with some data and also new SQL Server Login. We are going to use old northwind database scripts provided by microsoft.(https://github.com/microsoft/sql-server-samples/blob/master/samples/databases/northwind-pubs/instnwnd.sql)

setup.sql

5. docker-compose.yml → This is the main component, using this file we are going to deploy our services in local docker environment.

version: '3.4'

services:

sqlserverdirectus:

container_name: sqlserverdirectus

image: ${DOCKER_REGISTRY-}gringottsbanksqlserver

build:

context: .

dockerfile: Dockerfile

environment:

ACCEPT_EULA: Y

SA_PASSWORD: 2astazeY

MSSQL_DB: Northwind #Custom Image Environment Variable

MSSQL_USER: directus_db_user #Custom Image Environment

Variable

MSSQL_PASSWORD: directus_db_p@ssw0rd #Custom Image Environment

Variable

ports:

- 1434:1433

healthcheck:

test: ["CMD-SHELL", "/opt/mssql-tools/bin/sqlcmd -S localhost

-U $$MSSQL_USER -P $$MSSQL_PASSWORD -Q 'SELECT 1' "]

interval: 10s

retries: 10

start_period: 10s

timeout: 3s

directus:

container_name: directus

image: directus/directus:latest #Directus image

ports:

- 8055:8055

depends_on:

sqlserverdirectus:

condition: service_healthy

environment:

KEY: '255d861b-5ea1-5996-9aa3-922530ec40b1'

SECRET: '6116487b-cda1-52c2-b5b5-c8022c45e263'

DB_CLIENT: 'mssql'#DB Client Type you can use other type of

clients

DB_HOST: 'host.docker.internal'# host name of the DB

container, using host.docker.internal, you can use container

name

DB_PORT: '1434'#port on which DB Container is listening

DB_DATABASE: 'Northwind'#Database name

DB_USER: 'directus_db_user'#Newly created Database User

DB_PASSWORD: 'directus_db_p@ssw0rd'#Newly Created database

password

#If caching needs to be done, add another redis conatiner in

the services

#CACHE_ENABLED: 'true'

#CACHE_STORE: 'redis'

#CACHE_REDIS: 'redis://cache:6379'

ADMIN_EMAIL: 'admin@admin.com'#used for Login for Directus UI

ADMIN_PASSWORD: 'admin'

#used for Login for Directus UI

# Make sure to set this in production#Now we are ready to start our application.

To Start and build the containers go to Terminal →New Terminal Option in VS Code, it will open a Terminal and execute the below command.

docker-compose up -dOutput will look like below,

docker-compose up -d

Verify the by checking the Docker Desktop, there should be directus under Container/Apps , if you expand, you can see there should be two containers , 1. sqlserverdirectus 2. directus

Docker Desktop

Now go to this link, http://localhost:8055/ if deployment is successful, we can see the login the page in Directus UI like below.

So we are done with our Deployment and installation, now in next Part we will see how to use the API.

How to check contents in Directus UI and use the API?

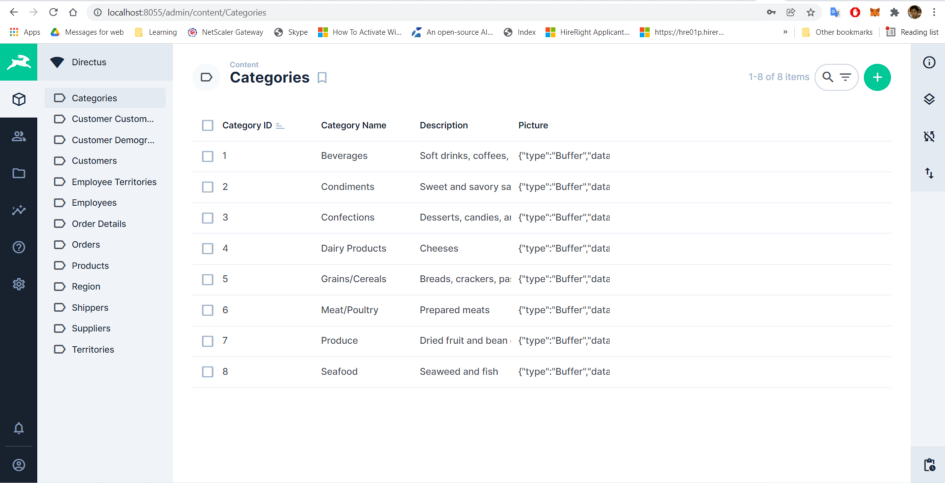

1. Login to Directus UI using credentials set in docker-compose.yml file. It will look like below, you can explore all the collections(tables and the data) available. You can add new data to collection and can update old existing data.

Edit Item in collections

2. Now lets set up public access for the API for our testing else you can try to access the API by adding Authorization header for the bearer token. To Set up the public access, go to Settings → Roles & Permissions → Public → Select any one of the collection i.e. Categories and Select All Access for all the types (Create, Read, Update, Delete, Share)

Public Access for Categories

Now Try in Postman to access the API, you should be able to get the data without Authorization header,

3. Now lets try accessing some collections which is not public i.e. Customers with out any Authorization Header.

/items/Customers without any Authorization Header

Now try to get the Token with the admin credentials used in the docker-compose.yml

/auth/login to get token

Now add that Token as Authorization Header (Bearer <token>) and try to get customers.

/items/Customers with Authorization Header (Bearer <token>)

This is working. We will be able to fetch data.

This way Directus exposed an secure API Endpoint with customizable Role Based Access out of the box. There is no need to build a separate Microservice for CRUD operations and There is also no need to Create a Separate UI to Maintain the Data. Both can be taken care by Directus.

How to use GraphQL Endpoint?

We can use this /graphql endpoint to query the API and get only the required data.

/graphql

You can also use, https://graphiql-online.com/ to query GraphQL endpoint.

Source: Medium - Arkaprava Sinha

The Tech Platform

Comments