Twitter Project- Viral Tweets using K-Nearest Neighbour

- The Tech Platform

- Oct 6, 2020

Tired of writing good, creative tweets that never go viral? Wondering how to reach Trump’s level of tweeting skill? Well, this project can’t help you with that. Here, we will use the K-Nearest Neighbour algorithm to predict whether a tweet will go viral based on various features associated with it. This project was part of my course at Codecademy, the coolest website for beginners. Let’s dive in.

What is K-Nearest Neighbour algorithm?

KNN, or K-Nearest Neighbour, works on the basic assumption that similar data points lie close to each other. It is used for both classification and regression. To classify a new data point, the algorithm calculates its distance to every labelled point in the training data, picks the k closest ones (its nearest neighbours) and assigns the label that is most common among them.
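To make the idea concrete, here is a minimal from-scratch sketch of KNN classification. The function name knn_predict and the toy points are made up for illustration; later in the post we use scikit-learn's classifier instead.

```python
from collections import Counter
import math

def knn_predict(train_points, train_labels, query, k=3):
    """Predict the label of `query` by majority vote among its k nearest training points."""
    # Euclidean distance from the query to every labelled training point
    distances = [
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    ]
    # Keep the k closest points and count how often each label appears
    nearest = sorted(distances, key=lambda pair: pair[0])[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Made-up 2-D points: label 0 sits near the origin, label 1 near (5, 5)
toy_points = [(0, 0), (1, 0), (0, 1), (5, 5), (6, 5), (5, 6)]
toy_labels = [0, 0, 0, 1, 1, 1]

near_origin = knn_predict(toy_points, toy_labels, (0.5, 0.5), k=3)
near_far_cluster = knn_predict(toy_points, toy_labels, (5.5, 5.5), k=3)
print(near_origin, near_far_cluster)
```

Each query point picks up the label of the cluster it sits in, because all three of its nearest neighbours belong to that cluster.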

Food for thought

Before we get into predictions, let’s think about which features of a tweet could make it go viral. Could it be the number of hashtags it has, or the number of friends the person has? It could also be a link in the tweet, or something in the language of the tweet itself.

Explore the data-set

We will begin by exploring the features and data points in the data-set. On exploration we find that some of the features are stored as dictionaries; one example is the user dictionary.

import pandas as pd

all_tweets = pd.read_json("random_tweets.json", lines=True)

print(len(all_tweets))

print(all_tweets.columns)

print(all_tweets.loc[0]['text'])

print(all_tweets.loc[0]['user'].keys())

all_tweets.info()

On running the above code, which loads the data from a JSON (JavaScript Object Notation) file, we can see that there are 11,099 data points and 31 features, a few of which are dictionaries. The data was collected between 2018-07-31 13:34:13 and 2018-07-31 13:34:40.

Define Viral Tweets

Now that we have a rough idea of our data set, the next step is to define which tweets count as viral. Why do we need this? The KNN classifier is a supervised machine learning algorithm, so it needs labelled examples to learn from. And why do we have to create the labels ourselves? Simply because they are not given to us in the data set.

I will start by creating a column called is_viral in my data set. For this project I have considered a tweet viral if it has been retweeted more than 1000 times. The following code does the job for us using numpy.where().

import numpy as np

all_tweets['is_viral'] = np.where(all_tweets['retweet_count'] > 1000, 1, 0)
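The cutoff of 1000 is a judgment call, so it can help to see how the label changes with a different threshold. The sketch below uses made-up retweet counts (not rows from random_tweets.json) and compares the fixed cutoff with a median-based one; the column names is_viral_fixed and is_viral_median are just for this illustration.

```python
import numpy as np
import pandas as pd

# Made-up retweet counts, not taken from random_tweets.json
toy = pd.DataFrame({"retweet_count": [0, 5, 1200, 30, 15000, 999, 1000, 2500]})

# Fixed cutoff, as in the post: strictly more than 1000 retweets
toy["is_viral_fixed"] = np.where(toy["retweet_count"] > 1000, 1, 0)

# Alternative cutoff: strictly more than the median retweet count
median_retweets = toy["retweet_count"].median()
toy["is_viral_median"] = np.where(toy["retweet_count"] > median_retweets, 1, 0)

print(toy)
print(toy["is_viral_fixed"].sum(), toy["is_viral_median"].sum())
```

Note that a tweet with exactly 1000 retweets is not viral under the fixed cutoff because the comparison is strict. A median cutoff splits the classes roughly in half, which may or may not match what you mean by "viral".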

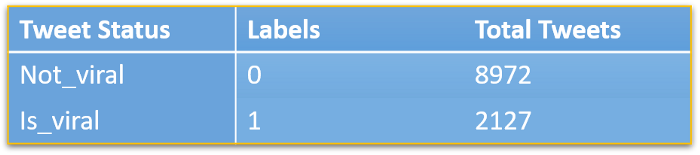

all_tweets['is_viral'].value_counts()Output Tweet Status, Source: OriginalFeature Engineering

Next we will build the features that we think are relevant for our analysis. As I mentioned earlier, many things might influence whether a tweet goes viral. I have considered tweet_length, hashtags_count, word_count, average_word_len, followers_count, friends_count and http_count as the features for training my model.
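As a rough sketch of what the text-based features measure, here they are computed for a single made-up tweet. The helper text_features and the sample text are hypothetical; followers_count and friends_count are left out because they come from the user dictionary, not from the text.

```python
def text_features(text):
    """Compute the text-based features used in this project for one tweet."""
    words = text.split()
    tweet_length = len(text)
    word_count = len(words)
    return {
        "tweet_length": tweet_length,
        "hashtags_count": text.count("#"),
        "http_count": text.count("http"),
        "word_count": word_count,
        # Total characters divided by number of words; 0.0 for empty text
        "average_word_len": tweet_length / word_count if word_count else 0.0,
    }

sample = "KNN is fun #ml #python https://example.com"
features = text_features(sample)
print(features)
```

Because tweet_length counts every character, spaces included, average_word_len is slightly larger than the true mean word length. That is fine for our purposes as long as it is computed the same way for every tweet.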

all_tweets['tweet_length'] = all_tweets.apply(lambda tweet: len(tweet['text']), axis=1)

all_tweets['hashtags_count'] = all_tweets.apply(lambda tweet: tweet['text'].count('#'), axis = 1)

all_tweets['followers_count'] = [all_tweets.loc[i]['user']['followers_count'] for i in range(len(all_tweets))]

all_tweets['friends_count'] = [all_tweets.loc[i]['user']['friends_count'] for i in range(len(all_tweets))]

all_tweets['http_count'] = all_tweets.apply(lambda tweet: tweet['text'].count('http'), axis = 1)

all_tweets['word_count'] = all_tweets.apply(lambda tweet: len(tweet['text'].split()), axis = 1)

all_tweets['average_word_len'] = all_tweets['tweet_length']*1.0 / all_tweets['word_count']

Normalizing Data

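Now we will normalize our data. We first assign the feature columns to a variable named data and then pass it to sklearn.preprocessing.scale, which rescales every feature to zero mean and unit variance. This brings all features to a comparable level, so that a feature with a huge range, such as followers_count, does not dominate the distance calculation in KNN. Note that scaling does not remove outliers; it only changes the units they are measured in. The maths behind it is out of the scope of this blog. That’s what the professor says in class, isn’t it?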
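A small sketch with made-up numbers shows what sklearn.preprocessing.scale does and why it matters for a distance-based method like KNN. The rows and values below are invented for illustration.

```python
import numpy as np
from sklearn.preprocessing import scale

# Made-up rows: [tweet_length, followers_count]
raw = np.array([
    [100.0, 50.0],
    [120.0, 2_000_000.0],
    [140.0, 300.0],
])

# Standardize each column independently
scaled = scale(raw, axis=0)

# Distance between the first and third tweet, before and after scaling
raw_gap = np.linalg.norm(raw[0] - raw[2])
scaled_gap = np.linalg.norm(scaled[0] - scaled[2])

print(scaled.mean(axis=0))  # approximately 0 for every column
print(scaled.std(axis=0))   # approximately 1 for every column
print(raw_gap, scaled_gap)
```

Before scaling, the distance between the first and third tweet comes almost entirely from the difference of 250 followers. After scaling, it comes almost entirely from tweet_length, since those two accounts are tiny compared with the two-million-follower one.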

from sklearn.preprocessing import scale

data = all_tweets[['tweet_length','hashtags_count','followers_count','friends_count','http_count','word_count','average_word_len']]

labels = all_tweets['is_viral']

# Standardize every feature column to zero mean and unit variance
data = scale(data, axis = 0)

Train Test Split

All ready to train the model and make predictions? Hold your horses. We have one last step before we dive into teaching our terminator. We need to split the data 80/20, i.e. 80% for training and 20% for testing. sklearn.model_selection.train_test_split should do the job.
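As a quick sanity check on the 80/20 split, the sketch below runs train_test_split on placeholder arrays with the same number of rows as our data set (11,099). The arrays, the variable names and the random_state value are assumptions for illustration only.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Placeholder data with the same number of rows as the data set in this post
n_rows = 11099
toy_data = np.arange(n_rows * 2).reshape(n_rows, 2)
toy_labels = np.arange(n_rows) % 2

# test_size=0.2 keeps 20% of the rows for testing; the seed value is arbitrary
toy_train, toy_test, toy_train_labels, toy_test_labels = train_test_split(
    toy_data, toy_labels, test_size=0.2, random_state=1
)

print(len(toy_train), len(toy_test))
```

With 11,099 rows this leaves 8,879 rows for training and 2,220 for testing. The rows are shuffled before splitting, so fixing random_state is what makes the split repeatable between runs.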

from sklearn.model_selection import train_test_split

# 80% of the rows go to training, 20% to testing; random_state is an arbitrary seed that makes the split repeatable
train_data, test_data, train_labels, test_labels = train_test_split(data, labels, test_size = 0.2, random_state = 1)

KNN Classifier

Phew, that was some hard work getting the data ready for training. Now we will train our model, test it and check its accuracy. Then we will change k, the number of neighbours, and run the model again to see which k gives the best balance between train and test accuracy. Remember that over-fitting can happen when k is too low and under-fitting when k is too high.

from sklearn.neighbors import KNeighborsClassifier

classifier = KNeighborsClassifier(n_neighbors = 5)

classifier.fit(train_data,train_labels)

print(classifier.score(test_data,test_labels))

The above code prints the accuracy of our model on the test set, which in our case comes out to 79.96% for a k value of 5 (score returns it as a fraction between 0 and 1).
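To see over-fitting and under-fitting in action, it helps to look at train and test accuracy side by side for a few values of k. The sketch below does that on synthetic stand-in data from make_classification, not on the tweet features, so the numbers it prints say nothing about our actual model.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Synthetic stand-in data with 7 features; the real tweet features are not used here
X, y = make_classification(
    n_samples=1000, n_features=7, n_informative=4, flip_y=0.1, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

results = {}
for k in (1, 5, 25, 200):
    model = KNeighborsClassifier(n_neighbors=k).fit(X_train, y_train)
    # Store (train accuracy, test accuracy) for this k
    results[k] = (model.score(X_train, y_train), model.score(X_test, y_test))
    print(k, results[k])
```

With k = 1 every training point is its own nearest neighbour, so train accuracy is perfect while test accuracy is usually lower: a sign of over-fitting. As k grows, the two numbers move closer together, and with a very large k the model tends to smooth over real structure in the data.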

Optimal K

We will now experiment with different values of k and plot the results to see which one gives the best accuracy. In our case it turns out to be k = 6. The code below plots accuracy against k for every k from 1 to 99.

from sklearn.neighbors import KNeighborsClassifier

import seaborn as sn

score = []

# Train one classifier per k and record its accuracy on the test set
for k in range(1,100):
    classifier = KNeighborsClassifier(n_neighbors = k)
    classifier.fit(train_data,train_labels)
    score.append(classifier.score(test_data,test_labels))

# x and y are passed as keywords; recent seaborn versions do not accept them positionally
sn.lineplot(x = list(range(1,100)), y = score)

Great! You made it to the end. Now we know how KNN works.

Check out Twitter Project- Classification using Naive Bayes Classifier to learn about another classification algorithm, one that is often used for classifying text, including email classification.

Source: Medium.com
