Office365 SharePoint: Validate & Transform XML Data from Enterprise Systems into SharePoint using Az

- Nakkeeran Natarajan

- Mar 20, 2019

- 3 min read

Updated: Mar 29, 2019

In this article, you will understand how the XML content from enterprise systems is first validated and converted/transformed to JSON, and pushed to Office 365 SharePoint online lists. We will be leveraging the power of Azure services, to achieve everything with just configurations.

The services used under Azure will be

Logics App

a. The entire flow will be set here.

Integration account.

a. With in integration account, we will store the schema and map files to validate and transform the data.

In my previous article, you have seen how the JSON content from enterprise systems is flowing into SharePoint online. There, we have not focused on validating the JSON data, since HTTP trigger by default validates.

We have seen the introduction to the features being leveraged in the previous article. Let us get directly into the business scenarios.

Business Scenario

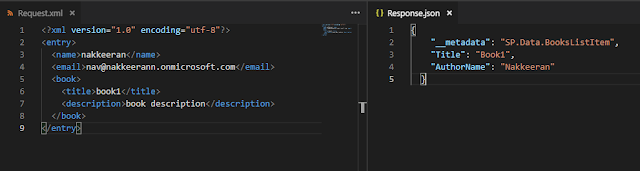

Assume an enterprise system exposes XML data to multiple systems. We will consider source system to be HTTP service (for our POC) and the target system to be SharePoint. The XML data from source system cannot be directly feed into SharePoint. For this purpose, we need to transform the XML content, into the required format. The following picture depicts the transformation. The data being transformed is a SharePoint list item entry, along with metadata properties required for the list item.

Let us start building the entire flow, to move the XML data from enterprise (HTTP service) into SharePoint.

Validate & Transform:

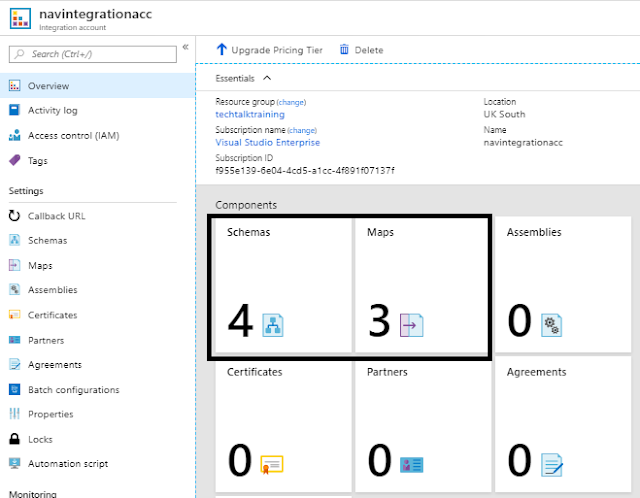

Navigate to Azure Portal, and create a integration account service as explained in the previous article.

The following snapshot shows the components that is being used for validation (schema component) and transformation (map component).

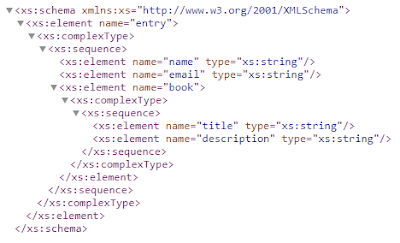

1. Schema component for Input Validations: From the azure integration account, and select the schema component. Configure the schema file, by clicking add option. Provide the name and schema file of the XML source data.

This component help storing the schema files for validating the XML inputs from source systems. The following snippet shows the schema file for the source XML data shown above. Store the following snapshot content as .xsd file and add it to schema component.

So, this helps validating the system sends the data in the right form into target.

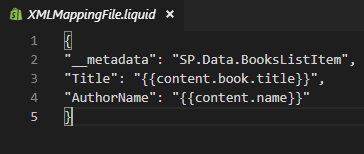

2. Map Component for Data Transformations: The XML data validated could be easily transformed into the required formats here. In our case, XML data will be transformed into JSON. We are using liquid templates to transform from one form into another. The following snapshot shows liquid template content, to convert into entries compatible of list items.

Azure Logics App Flow

Create a Azure Logics App service, and configure the integration account to the one created above. These steps are explained in the previous article.

The following steps explains the flow configurations. The data to be transformed from HTTP service (enterprise system) into Office 365 SharePoint list.

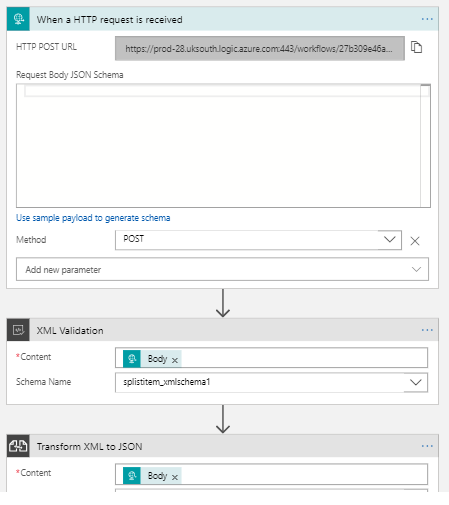

HTTP request is used as a trigger to initiate the data post request, (This could replaced with any other system request endpoint, like Azure Functions, etc. For our better understanding, we have chosen the HTTP request service).

Create "When a HTTP request is received" as a trigger.

Request Body JSON schema is not required, since our input will be in XML format. This validation is done in the next step.

Post method is chosen for posting data.

Once saved, the HTTP URL will be generated, which could be used from postman tools for triggering flows.

Validation & Mapping actions are configured. The input files (schema & map) stored on the integration account, will be mapped for validation and transferring of data.

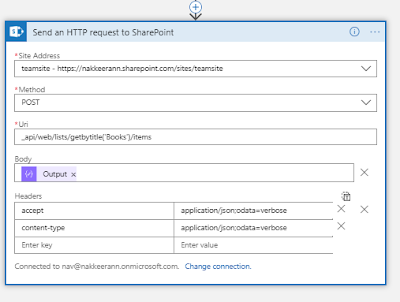

Send HTTP request to SharePoint action is used to create the data on SharePoint list. The input could be the JSON data transformed above. (This could have been done by simply choosing create item action. But for our requirement scenario, we have chosen the REST endpoint request action)

Test the flow using the tools like postman for the flow from HTTP endpoint to SharePoint list. (This step is again explained in my previous article for your reference).

Comments